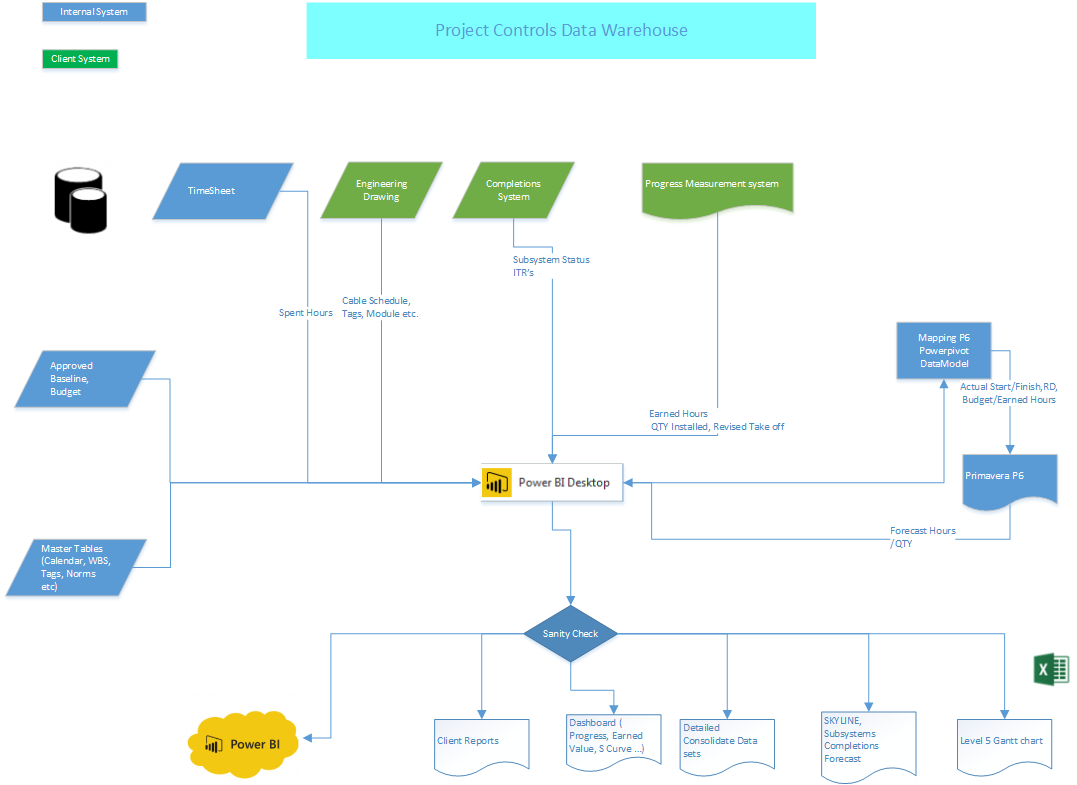

This blog post documents my first experience with mapping in PowerBI. The reports we produce are usually time series and pivot tables, but in this case there is a geographic dimension to the data, so I thought it was a good opportunity to try a map chart in PowerBI. It turned out to be a bit more difficult than I thought.

The data is thousands of piles spread over a huge area. My first attempt was just to load the data into PowerBI and view it on a map. For some reason, PowerBI showed an empty map.

PowerBI expects the data to be in latitude and longitude format, but my data uses easting and northing, so I had to convert it. There are plenty of online converters, but they are not practical as they don’t support batch processing, and converting point by point is not an option.

After some googling, I found the excellent open source software QGIS. It was very straightforward: the software automatically converts the coordinate reference system (I think the technical term is reprojection). My data is in GDA94 / MGA zone 55 and the result should be in WGS 84.
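For anyone who prefers a script to a GUI, here is a minimal sketch of the same conversion in plain Python. It is not what I did (I used QGIS), and it rests on a few assumptions: it applies the standard inverse Transverse Mercator series on the GRS80 ellipsoid with the MGA zone 55 grid parameters (central meridian 147°E, scale factor 0.9996), and it treats GDA94 and WGS 84 as coincident, which should be close enough for a map visual but not for survey work. The function name `mga55_to_latlon` is my own.

```python
import math

# GRS80 ellipsoid, the one used by GDA94
A = 6378137.0
F = 1 / 298.257222101
E2 = F * (2 - F)  # first eccentricity squared

# MGA zone 55 grid parameters (same as UTM zone 55 south)
K0 = 0.9996
FALSE_EASTING = 500_000.0
FALSE_NORTHING = 10_000_000.0
CENTRAL_MERIDIAN_DEG = 147.0


def mga55_to_latlon(easting, northing):
    """Return (latitude, longitude) in decimal degrees.

    Assumption: GDA94 and WGS 84 are treated as the same datum.
    """
    x = easting - FALSE_EASTING
    y = northing - FALSE_NORTHING

    # Footpoint latitude, from the meridian arc length
    mu = (y / K0) / (A * (1 - E2 / 4 - 3 * E2**2 / 64 - 5 * E2**3 / 256))
    e1 = (1 - math.sqrt(1 - E2)) / (1 + math.sqrt(1 - E2))
    phi1 = (mu
            + (3 * e1 / 2 - 27 * e1**3 / 32) * math.sin(2 * mu)
            + (21 * e1**2 / 16 - 55 * e1**4 / 32) * math.sin(4 * mu)
            + (151 * e1**3 / 96) * math.sin(6 * mu)
            + (1097 * e1**4 / 512) * math.sin(8 * mu))

    ep2 = E2 / (1 - E2)  # second eccentricity squared
    sin1, cos1, tan1 = math.sin(phi1), math.cos(phi1), math.tan(phi1)
    c1 = ep2 * cos1**2
    t1 = tan1**2
    n1 = A / math.sqrt(1 - E2 * sin1**2)
    r1 = A * (1 - E2) / (1 - E2 * sin1**2) ** 1.5
    d = x / (n1 * K0)

    lat = phi1 - (n1 * tan1 / r1) * (
        d**2 / 2
        - (5 + 3 * t1 + 10 * c1 - 4 * c1**2 - 9 * ep2) * d**4 / 24
        + (61 + 90 * t1 + 298 * c1 + 45 * t1**2 - 252 * ep2 - 3 * c1**2)
        * d**6 / 720)
    lon = (d
           - (1 + 2 * t1 + c1) * d**3 / 6
           + (5 - 2 * c1 + 28 * t1 - 3 * c1**2 + 8 * ep2 + 24 * t1**2)
           * d**5 / 120) / cos1
    return math.degrees(lat), CENTRAL_MERIDIAN_DEG + math.degrees(lon)
```

Calling it in a loop over the rows of a CSV export gives the batch processing the online converters lack. If you need proper datum handling, a dedicated library or QGIS itself is the safer choice.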

Voilà, the data is ready for PowerBI.

That’s a bit disappointing: PowerBI complains that it cannot show all the points (PowerBI has a maximum of 3,500 points per chart). As a comparison, this is how Tableau shows the data.

Tableau doesn’t have a limitation on the number of points.

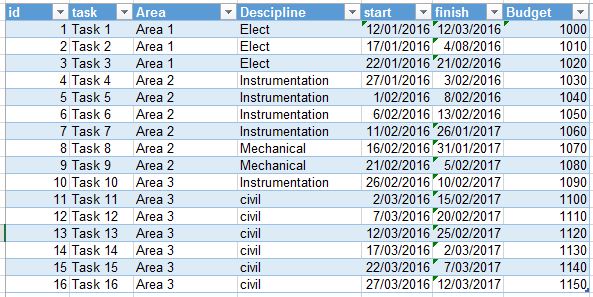

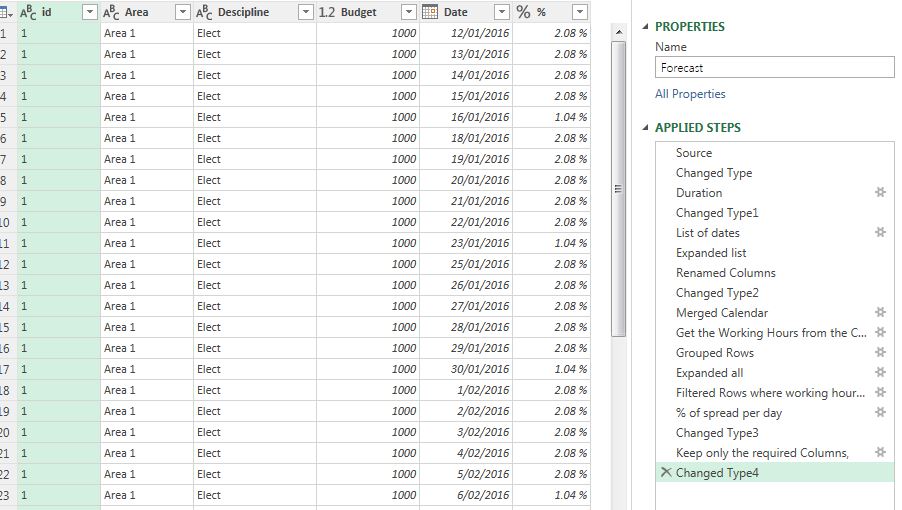

Alternative approach

As PowerBI cannot show all the points, one solution is to create a shapefile that groups the points into smaller areas, and again, it was trivial to do in QGIS.

QGIS will group the points based on a field you provide.

And voilà
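To give an idea of what grouping points into areas means, here is a rough sketch in plain Python. I am assuming the area for each group is the convex hull of its points, which may not be exactly what the QGIS tool produces, and the group labels and function names (`convex_hull`, `hulls_by_group`) are made up for illustration.

```python
from collections import defaultdict


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices counter-clockwise."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        # > 0 when o -> a -> b makes a counter-clockwise turn
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)

    upper = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)

    # The last point of each chain is the first point of the other
    return lower[:-1] + upper[:-1]


def hulls_by_group(rows):
    """rows: iterable of (group, x, y). Returns {group: hull vertices}."""
    groups = defaultdict(list)
    for group, x, y in rows:
        groups[group].append((x, y))
    return {group: convex_hull(pts) for group, pts in groups.items()}
```

Each group (a sub-array, for example) ends up as one polygon instead of hundreds of points, which is what keeps the map under the point limit.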

QGIS saves the layer as an ESRI shapefile, but PowerBI requires TopoJSON, so I used the excellent tool mapshaper.org to convert it.

Just make sure you import all the files that make up the shapefile, not only the .shp.

Now the TopoJSON is ready to be loaded in PowerBI

Time to celebrate 🙂 Not really, there is a problem: I want to show different colours based on the status. If a sub-array is completed, I want it to show yellow, if it is > 75 %, I want another colour, and so on. The Shape Map in PowerBI does not offer this option.

Fortunately, Synoptic Panel has more options. It uses SVG as a map format, which I got from mapshaper.org

Preparing the custom map is a one-time operation. The colours will change as the construction progresses; you just need to assign which measures you want to show.
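The colour rule itself is just a set of bands on the completion percentage. Here is a small sketch of the logic in Python, only to make the idea concrete; in the report it would live in a measure, not in Python. Only "completed is yellow" and "a separate band above 75 %" come from my actual requirement; the other colours, the lower band and the function name `status_colour` are placeholders I made up for this example.

```python
def status_colour(percent_complete):
    """Map a sub-array's completion percentage to a display colour."""
    if percent_complete >= 100:
        return "yellow"   # completed
    if percent_complete > 75:
        return "green"    # assumed colour for the > 75 % band
    if percent_complete > 0:
        return "orange"   # assumed band: work started
    return "grey"         # assumed colour: not started
```

For example, `status_colour(80)` falls in the > 75 % band and `status_colour(100)` is yellow.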

What’s Next

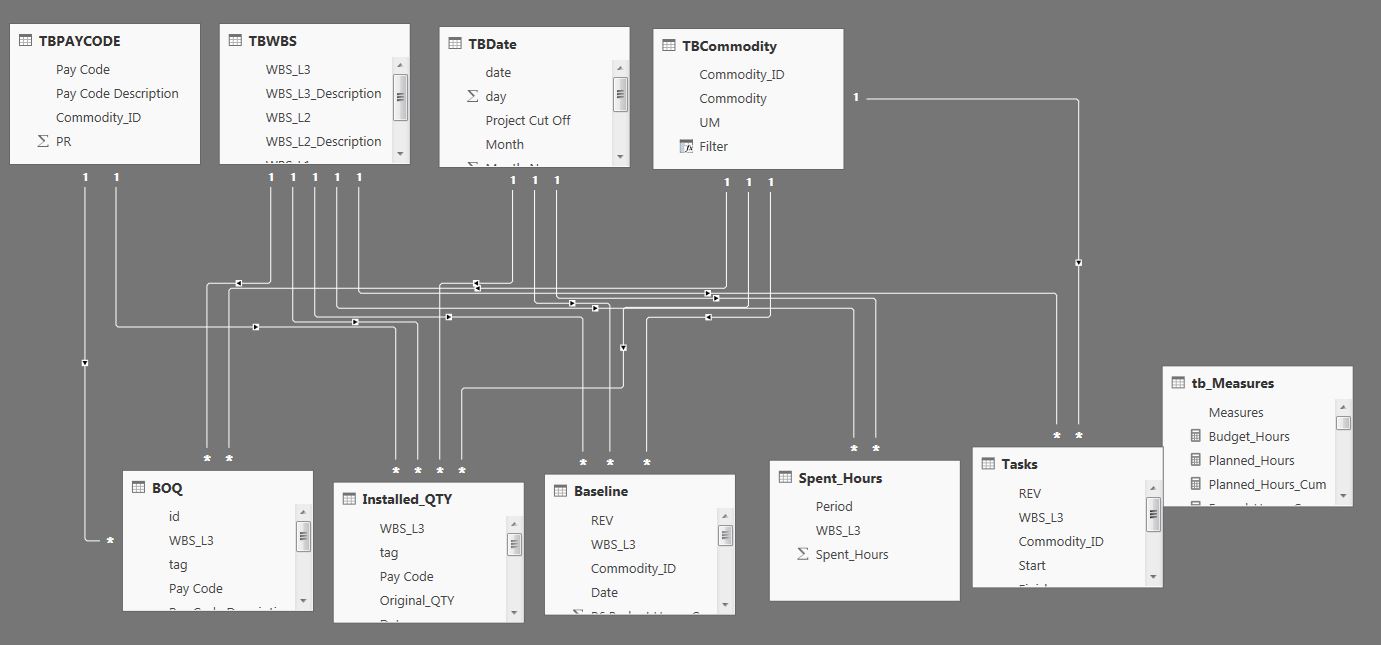

As long as your data model is properly built, you can do a lot of interesting stuff: you can filter by type of work (piling, tracker installation, PV modules), or you can click on one sub-array and see in detail what has been installed and what’s missing.