TL;DR: Shared a notebook showing the results of Iceberg metadata conversion to Delta in Onelake.

I’ve been following the evolution of Iceberg shortcuts to OneLake and I’m genuinely impressed with how the engineering team has invested so much energy into making it more robust, it is a good idea to read the documentation.

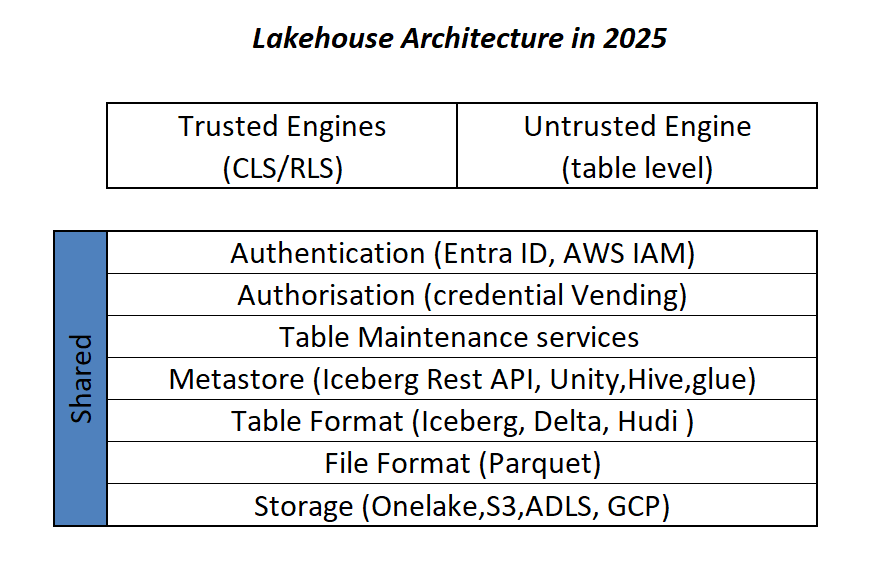

Essentially, XTable is used behind the scenes. Think of it as a translator for your open table format. Instead of requiring you to convert data from one format (like Iceberg) to another (like Delta) just to query them together, XTable allows you to access and interact with tables in different formats as if they were a single, unified table within OneLake—all without user intervention.

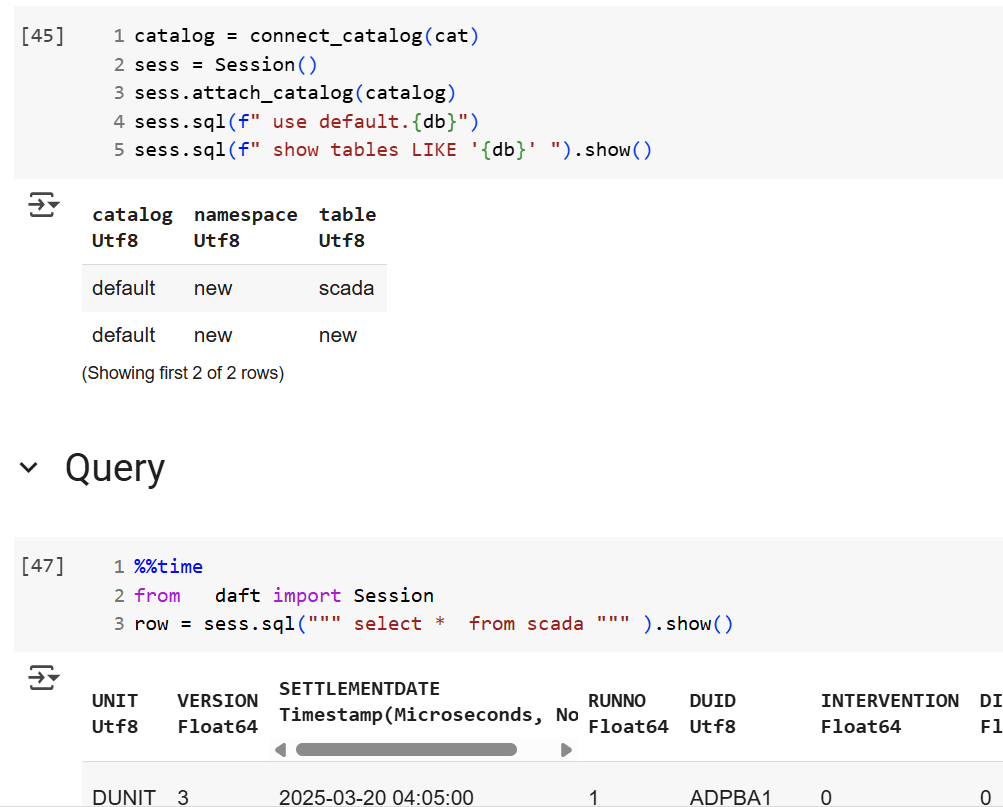

To truly put this to the test, I recently ran an experiment in a real production environment using my paid tenant—no sandboxes here! Here’s the logic from the Python notebook:

- Accessing data from an Iceberg table using a shortcut (sourced from Snowflake; the data can be stored anywhere—Azure, S3, GCP, or OneLake, You can use BigQuery too or any Iceberg writer).

- Inserting arbitrary data and performing delete operations.

- Counting the total rows using Snowflake.

- Counting the total rows using Fabric notebook as a Delta Table.

- Recording the record counts in a results table to track and visualize the comparison over time.

The results were quite awesome. I plotted the total record counts from both the Iceberg and Delta perspectives using two distinct colors and observed a perfect match. This confirms the seamless interoperability provided by XTable.

Lesson learned:

See the code snippet below for inserting data in Snowflake:

snow.execute(f'insert into ONELAKE.ICEBERG.scada select * from ONELAKE.AEMO.SCADARAW limit {limit};')

snow.execute('delete from ONELAKE.ICEBERG.scada where INITIALMW = 0')

snow.execute("SELECT SYSTEM$GET_ICEBERG_TABLE_INFORMATION('ONELAKE.iceberg.scada');")

In rare cases—especially when running multiple transactions at the same time—Snowflake may not instantly generate the metadata. To be 100% sure, run this SQL statement

SELECT SYSTEM$GET_ICEBERG_TABLE_INFORMATION('Table_name')to force the engine to write new Iceberg metadata. It’s an annoying aspect of Iceberg: every commit generates three files. That’s a bit excessive. Some engines prefer to group multiple commits to reduce the size of the metadata. Again, it’s rare—but it does happen.