Note : if you are using flat price, nothing to see here 🙂

TL; DR : This blog is about a particular use case, assume a customer is using on demand pricing for BigQuery ( 5 $/ TB scanned) and bought some BI Engine reservation (1 $/1 GB compressed/Day), Currently under heavy load, BI Engine fallback to BigQuery, I think it is a problematic behavior and the customer should have the option.

- Add the Queries to a Queue, slower but an order of magnitude cheaper

- At least have an option to define the behavior.

The Use Case

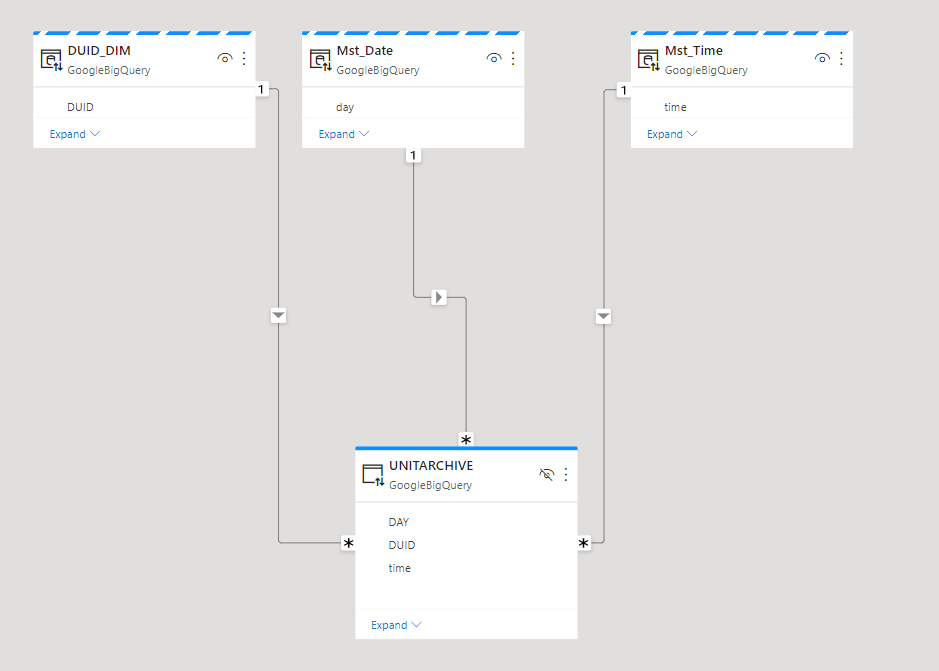

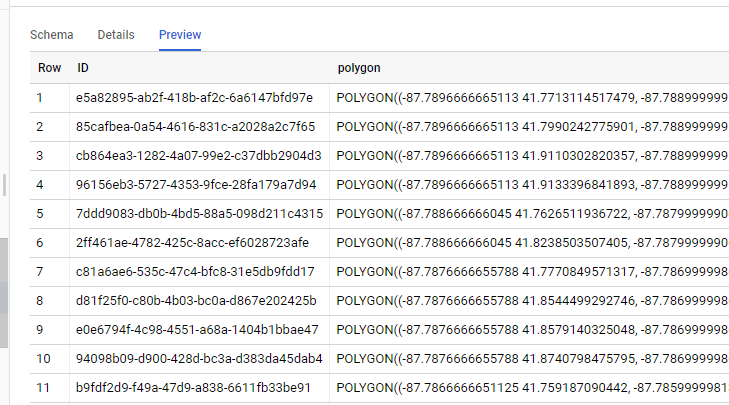

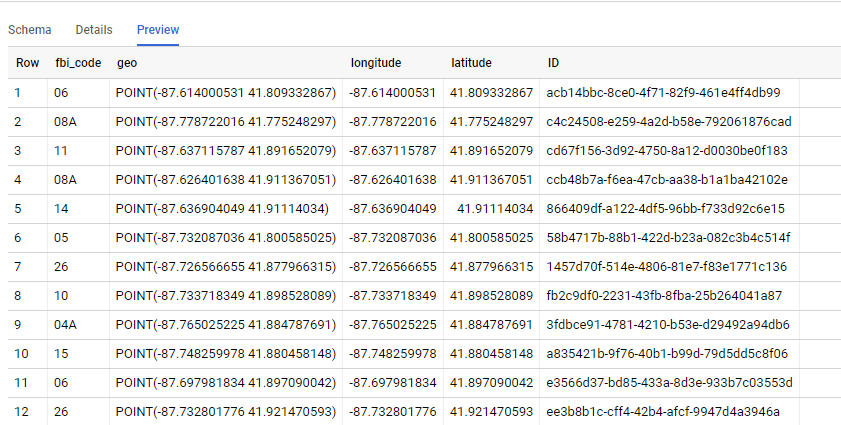

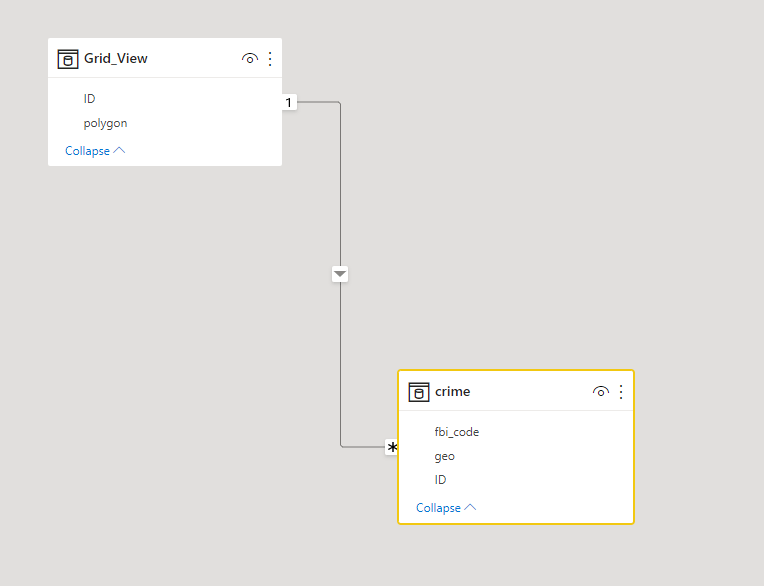

Let’s say you have a relatively small table and you expect around 20 concurrent users Querying it, using a Live connection from Tableau or Data Studio.

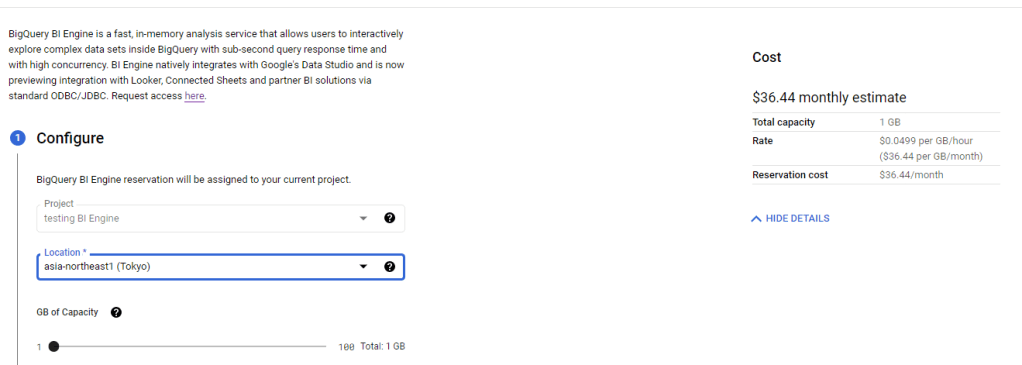

First let’s create 1 GB reservation. ( 36.44 $/Month)

Simulate Query Load

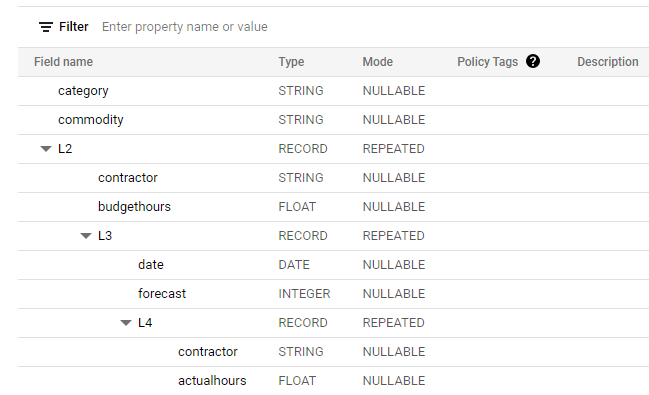

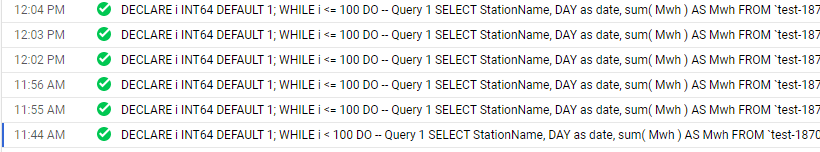

In a previous blog, I got complains that my benchmark is not reproducible, this time, I am using a different Approach, although it is the same base Table ( 74 Million records with new data every 5 minutes) , I am using a SQL Script that run 100 time, every time it generate 5 sequential Queries, and to avoid any cache the date filter is random, something like this

SELECT

StationName,

DAY as date,

sum( Mwh ) AS Mwh

FROM

`test-187010.ReportingDataset.Nem_View`

where DAY >= date_add(DATE "2016-12-25",INTERVAL cast(floor(rand()*100)+1 as INT64) DAY)

GROUP BY

1,

2

ORDER BY

date ASC

Limit 1000

The data is public you can test it yourself, notice it is saved in Tokyo Region, BI Engine should be in the same Region.

The test Load is simple, run the first script and then slowly increasing the number of script running in parallel, the idea is to simulate an increase in concurrency

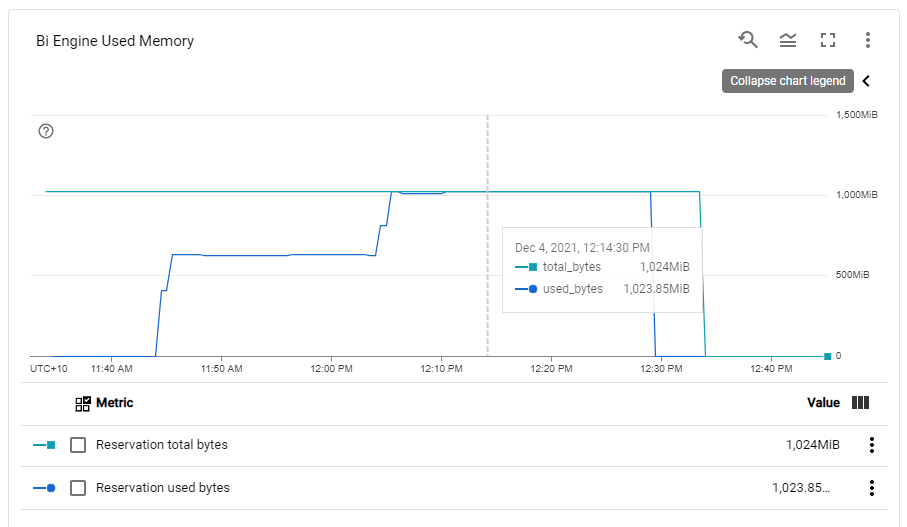

BI Engine consume more memory when the load increase, Very Nice 🙂

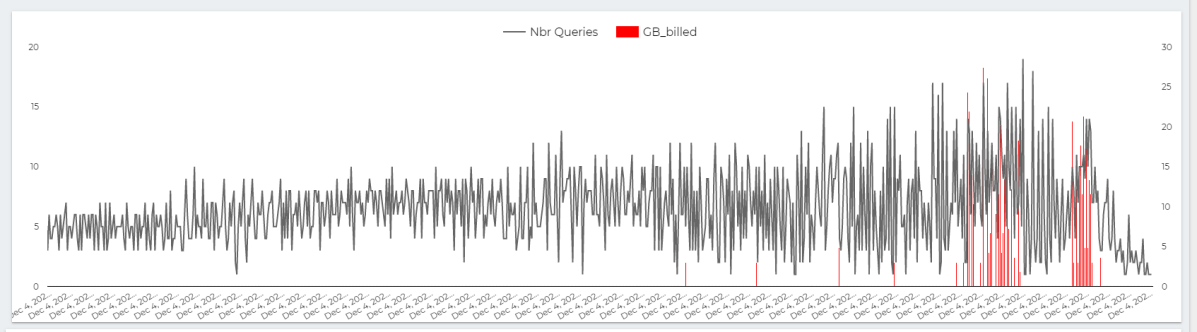

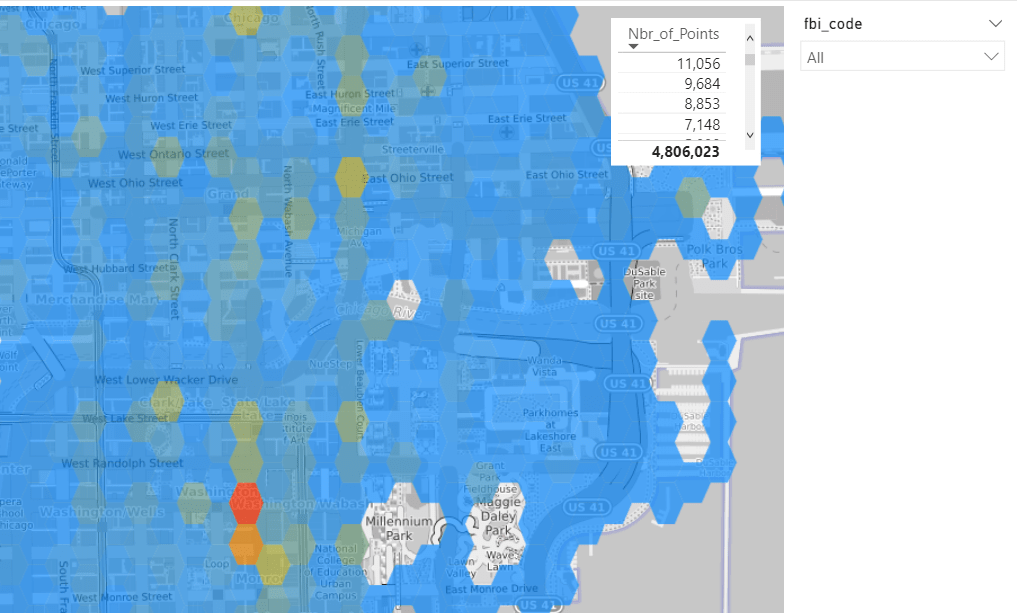

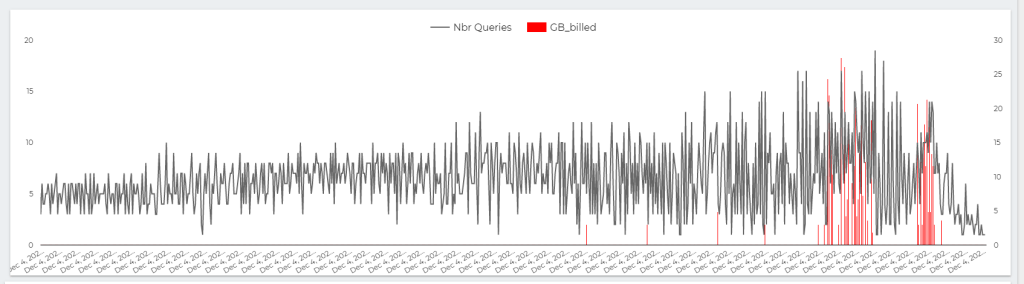

This chart show the number of Queries per second , The Red Line is GB Billed, The red Line is something that worry me a lot.

The whole value proposition of BI engine is the cost, yes it is fast and all but as far as I am concerned, it make Interactive Live BI workload very cost effective, on demanding Pricing is useful for ETL workload, not serving Queries.

Note : you can’t use BI Engine for transformation, saving Query results to a permanent table is not supported.

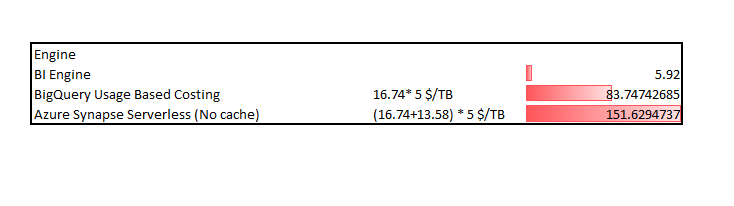

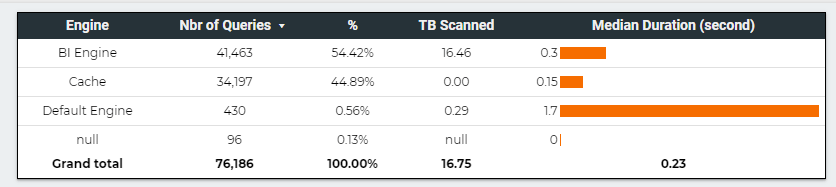

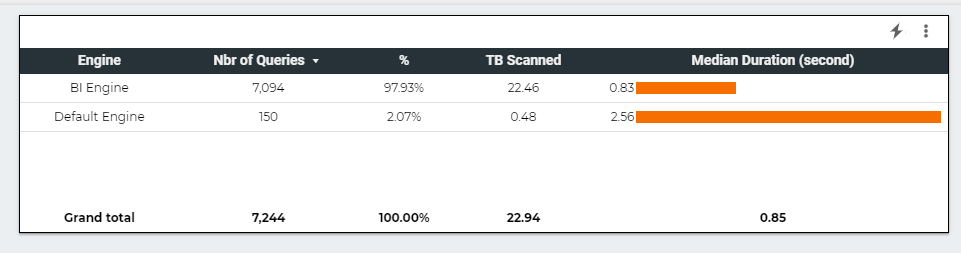

Here is The Workload Breakdown.

let’s admit the obvious here, BI Engine scanned 22 TB of data in less than an Hour for the cost of 5 cents, it handled the load gracefully, till we start getting 17 concurrent Queries ( your mileage will vary based on the query complexity, volume of data etc etc )

but when BI Engine got overloaded with Queries, it fall back to BigQuery on demanding pricing which in no time consumed the daily Quota ( 500 GB) for a cost of 2.5 $. ( always set up a quota per day)

The Math is very simple, 22 TB for 5 cents versus 0.5 TB for 2.5 $

What‘s the solution

I really would like that BigQuery behavior change, if BI engine is overload, just added the Queries to a Queue to slow down things, or at least make this behavior optional, let the customer decide, if we need more concurrency we can by more memory, but don’t make the decision on our behalf.

PowerBI Vertipaq, which is in the same tech category, handle it differently, if you reach a limit, the Engine will simply throttle the Queries, I think BI Engine should behave the same 🙂

Takeway

For the Previous workload, and to support high level concurrency, and keep a Low cost, the Reserved memory Should be 2 GB, The Good news, the Product Team is working on Auto Scaling, No ETA though.

A vote on this feature request will be appreciated.