When I started that AEMO Dashboard, I had a hard time dealing with the PowerBI gateway. It is just sitting there, and my laptop has to be online whenever I need to schedule a refresh. It just annoyed me, and I could not understand why cloud-based data needs an on-premises gateway anyway. Later I learned that, strictly speaking, it was not required: there was an undocumented feature to get around it (the trick is in the first blog, thanks @Rad_Reza).

But before I was aware of that, I went down some rabbit holes dealing with new tools that are out of my comfort zone, and I think they are worth sharing.

My first thought was, instead of accessing the data directly from the website, to copy the data to cloud storage and read it from there. I already have a Google Storage account, it is very generous with 5 GB of free storage, and my data is not big, around 2 GB of zipped CSV.

First setback: there is no native connector to Google Storage, and even if there was, we have something called egress fees. In a nutshell, cloud storage is really low cost and loading data is free, but getting your data out is not free, unless it stays with the same provider and in the same region. Most of the cloud vendors use the same model. As my data will be processed in PowerBI, the clear choice is Azure Blob Storage.

Azure Blob Storage

The setup is very simple, I used the following options:

- the same region as my PowerBI region (otherwise you pay the egress fees)

- for replication I used LRS

As PowerBI doesn't support Data Lake V2, I used the classical Blob Storage.

Let’s move some data

Anyway, now that I have my storage, I need a tool to copy the files from http://nemweb.com.au/Reports/Current/Daily_Reports/ to my storage account.

Azure Data Factory

When you want to copy data, the official tool in Azure is Data Factory. I tried to play around with copy activities, and it is straightforward. My first attempt did work and it was fast, actually too fast 😊: no zip was transferred, but rather an HTML page.

The copy activity can probably handle this case just fine, but when you use your own credit card on a cloud tool and you don't know what you are doing, better to step back and take the time to understand how it works. I deleted the newly created resources and went to the second option, Python!!!
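As a side note, the "HTML instead of zip" surprise is cheap to detect whatever tool does the copying. The sketch below is only an illustration: the helper name `looks_like_zip` and the two sample payloads are made up for the example, and it relies on the standard library's `zipfile.is_zipfile` rather than anything Data Factory offers.

```python
import io
import zipfile


def looks_like_zip(payload: bytes) -> bool:
    """Return True if the bytes look like a zip archive (hypothetical helper)."""
    return zipfile.is_zipfile(io.BytesIO(payload))


# Build a tiny zip in memory to stand in for a real download.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("sample.csv", "a,b\n1,2\n")
good_payload = buffer.getvalue()

# An HTML page, similar to what arrived instead of the zip file.
bad_payload = b"<html><body>Directory listing</body></html>"

print(looks_like_zip(good_payload))
print(looks_like_zip(bad_payload))
```

A check like this, run on the first copied file, would flag the problem before paying for a full transfer.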

Python

Normally I go with R, but Blob Storage has no API for R. I have very limited experience with Python, just using it for the excellent package altair, so let's try something new.

I was very pleasantly surprised: the amount of documentation for Python is just amazing. Once I asked a question on Stack Overflow and got a very succinct answer in less than a minute, and no one was judgemental or downvoted my question (the question was very basic). The only drawback is that sometimes the code you find works only for Python 2, while I am using Python 3. Anyway, enough talking, let's show some pseudo code.

Step 1: get a list of file names from the website

import re

from urllib.request import urlopen

url = "web address where the files are saved"

result = urlopen(url).read().decode('utf-8')

pattern = re.compile(r'[\w.]*\.zip')

List_website = pattern.findall(result)

Here is a snapshot of the results; the full list is 60 items.

['PUBLIC_DAILY_201904260000_20190427040503.zip', 'PUBLIC_DAILY_201904260000_20190427040503.zip', 'PUBLIC_DAILY_201904270000_20190428040502.zip']
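To make step 1 concrete without hitting the website, here is a minimal sketch that runs the same kind of regular expression over a small HTML fragment. The fragment is an assumption about what a directory listing might look like, not a copy of the real page, and `extract_zip_names` is a hypothetical helper. Each name tends to appear twice in a link (once in the `href`, once in the link text), so the sketch also drops duplicates while keeping the order.

```python
import re

# One or more word characters or dots, followed by a literal ".zip".
ZIP_NAME = re.compile(r"[\w.]+\.zip")


def extract_zip_names(html: str) -> list:
    """Return the zip file names found in the page, without duplicates."""
    # dict.fromkeys keeps the first-seen order while removing repeats.
    return list(dict.fromkeys(ZIP_NAME.findall(html)))


# Assumed shape of a directory listing; the real page may differ.
sample_html = (
    '<a href="/Reports/Current/Daily_Reports/'
    'PUBLIC_DAILY_201904260000_20190427040503.zip">'
    "PUBLIC_DAILY_201904260000_20190427040503.zip</a><br>"
    '<a href="/Reports/Current/Daily_Reports/'
    'PUBLIC_DAILY_201904270000_20190428040502.zip">'
    "PUBLIC_DAILY_201904270000_20190428040502.zip</a><br>"
)

names = extract_zip_names(sample_html)
print(names)
```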

Step 2: get a list of file names from the blob storage

In the first run the list is empty, as we did not load anything yet, so I loaded a couple of files manually just to test that it is working. The API for Blob Storage is very simple: you only need to provide your storage account name and key, and I love that.

from azure.storage.blob import BlockBlobService

block_blob_service = BlockBlobService(account_name='xxxxxx', account_key='xxxxxx')

generator = block_blob_service.list_blobs(container_name, prefix="current/")

In the same way, you get a list of names.
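Turning that listing into plain file names could look like the sketch below. It assumes each listed item exposes a `name` attribute that includes the `current/` prefix; the helper `blob_file_names` and the fake listing are hypothetical stand-ins so the example runs without a storage account.

```python
from types import SimpleNamespace


def blob_file_names(blobs, prefix="current/"):
    """Collect blob names as a set, dropping the folder prefix if present."""
    names = set()
    for blob in blobs:
        name = blob.name
        if name.startswith(prefix):
            name = name[len(prefix):]
        names.add(name)
    return names


# Stand-in for the result of list_blobs(); real items are SDK objects.
fake_listing = [
    SimpleNamespace(name="current/PUBLIC_DAILY_201904260000_20190427040503.zip"),
    SimpleNamespace(name="current/PUBLIC_DAILY_201904270000_20190428040502.zip"),
]

list_azure = blob_file_names(fake_listing)
print(sorted(list_azure))
```

Stripping the prefix matters only if the website names are compared against names stored under a folder; if the blobs sit at the container root, the helper leaves them unchanged.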

Step 3: get a list of files that exist on the website and don't exist in the storage

The code in Python is very simple: it is just a subtraction of two sets, which you then convert to a list using the function list (I get why people like Python).

files_to_upload = list(set(List_website)-set(list_azure))
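A tiny worked example of that subtraction, with made-up inputs: the website list contains a repeated name, and one file is already in storage. Sorting the result is an addition of mine, not part of the original script; since the names embed the date, it makes the upload order predictable.

```python
# Sample inputs for illustration only.
list_website = [
    "PUBLIC_DAILY_201904260000_20190427040503.zip",
    "PUBLIC_DAILY_201904260000_20190427040503.zip",  # repeated name
    "PUBLIC_DAILY_201904270000_20190428040502.zip",
    "PUBLIC_DAILY_201904280000_20190429040501.zip",
]
list_azure = ["PUBLIC_DAILY_201904260000_20190427040503.zip"]

# Set difference: on the website but not yet in storage.
files_to_upload = sorted(set(list_website) - set(list_azure))
print(files_to_upload)
```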

Step 4: upload the new files to Azure Blob Storage

The same here, the Azure API is very simple and clear. I had only one issue: when the script uploads in a loop, it does not wait until the transfer is completed before jumping to the next file. My first workaround was just to use sleep (a synchronous copy is supported, but not in this scenario where the input comes from a URL). Anyway, I got the answer on Stack Overflow.

for x in files_to_upload:

    block_blob_service.copy_blob(container_name, x, url + x)

    copy_status = block_blob_service.get_blob_properties(container_name, x)

    # use the code below to check the copy status, whether it is completed or not

    while str(copy_status.properties.copy.status) != "success":

        copy_status = block_blob_service.get_blob_properties(container_name, x)

Basically, wait till the status of the copy is success before moving to the next item (did I say I love Python syntax?).
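The wait-until-success idea can be sketched a bit more defensively, with a short pause between checks and an upper time limit so a stuck copy does not loop forever. This is not the original script: `wait_for_copy` is a hypothetical helper, the status lookup is passed in as a function so the example runs without Azure, and the "failed" and "aborted" values are my understanding of the other copy states.

```python
import time


def wait_for_copy(get_status, poll_seconds=1.0, timeout_seconds=300.0):
    """Poll get_status() until it returns "success", or raise on failure/timeout."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = get_status()
        if status == "success":
            return status
        if status in ("failed", "aborted"):
            raise RuntimeError("copy ended with status: " + status)
        if time.monotonic() >= deadline:
            raise TimeoutError("copy still " + status + " after timeout")
        # Pause before asking again instead of hammering the service.
        time.sleep(poll_seconds)


# Fake status source: pending twice, then success.
statuses = iter(["pending", "pending", "success"])
result = wait_for_copy(lambda: next(statuses), poll_seconds=0.01)
print(result)
```

In the loop above, `get_status` would be a small function that calls `get_blob_properties` for the current file and returns the copy status as a string.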

The full script is here:

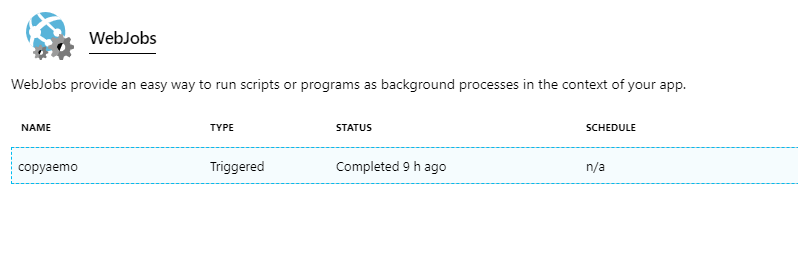

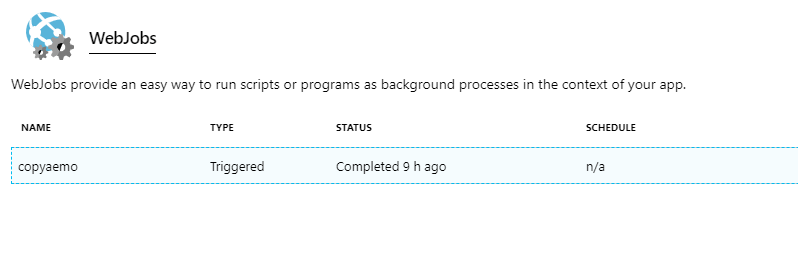

WebJobs: a Free Job Scheduler

OK, so we do have a script that works, and now we need to run it on a schedule, once per day at 5 AM. Keep in mind the whole purpose of this workflow is not to use on-premises software. I just need to find a service that runs a script in the cloud on a schedule, and as I am already on Azure, let's stick with that ecosystem.

As it is a personal project, I prefer a free solution; my script runs only every 24 hours, for a couple of minutes. A quick Google search and I found this little treasure. I will not repeat the steps here: WebJobs is a service that does just that.

Note that the package azure-storage-blob is not a base package in Python, so you need to install it first in WebJobs. The schedule functionality is very flexible as it uses CRON. I wish we had something like that in PowerBI Dataflows.
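For reference, here is a sketch of what a daily 5 AM schedule might look like. As far as I understand, a scheduled WebJob can read its schedule from a `settings.job` file placed next to the script, and the CRON expression has six fields (seconds first) rather than the usual five. Treat the file name and the time zone remark as assumptions to verify against the Azure documentation.

```python
import json

# Six fields: second, minute, hour, day, month, day-of-week.
# "0 0 5 * * *" means 05:00:00 every day (assumed to be UTC by default).
DAILY_AT_5AM = "0 0 5 * * *"

settings = {"schedule": DAILY_AT_5AM}
settings_json = json.dumps(settings, indent=2)

# This text is what would go into the settings.job file.
print(settings_json)
```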

End results

Every day at exactly 5 AM, a new file shows up in the Azure storage. Although I don't need those files (I am now using another approach to load the files directly into PowerBI), it is important to build a data lake (yes, I just said that, I am just joking: a data lake is a folder in the cloud where you save the raw files, nothing more). Storage is cheap, but most importantly the requirements may change: I may need to report on another dimension, and it is crucial to keep the raw unprocessed data.

Takeaways

- Python is awesome.

- The Azure APIs for Python are straightforward.

- Azure is awesome.

- Be careful of egress fees.

- CRON is awesome, I wish it was supported in PowerBI Dataflows.

- I wish PowerBI Dataflows could save a raw file. Power Query is amazing, but it does not copy raw files.

- I wish WebJobs added support for R.
