If you’ve ever worked with Delta tables, for example using delta_rs, you know that writing to one is as simple as passing the URL and credentials. It doesn’t matter whether the URL points to OneLake, S3, Google Cloud, or any other major object store — the process works seamlessly. The same applies whether you’re writing data using Spark or even working with Iceberg tables.

However, things get complicated when you try to list a bucket or copy a file. That’s when the chaos sets in. There is no standard method for these tasks, and every vendor has its own library. For instance, if you have a piece of code that works with S3, and you want to replicate it in Azure, you’ll need to rewrite it from scratch.

This is where OpenDAL comes in. OpenDAL is an open-source library written in Rust (with Python bindings) that aims to simplify this problem. It provides a unified library to abstract all object store primitives, offering the same API for file management (or “unstructured data” if you want to sound smart) across multiple platforms. While the library has broader capabilities, I’ll focus on this particular aspect for now.

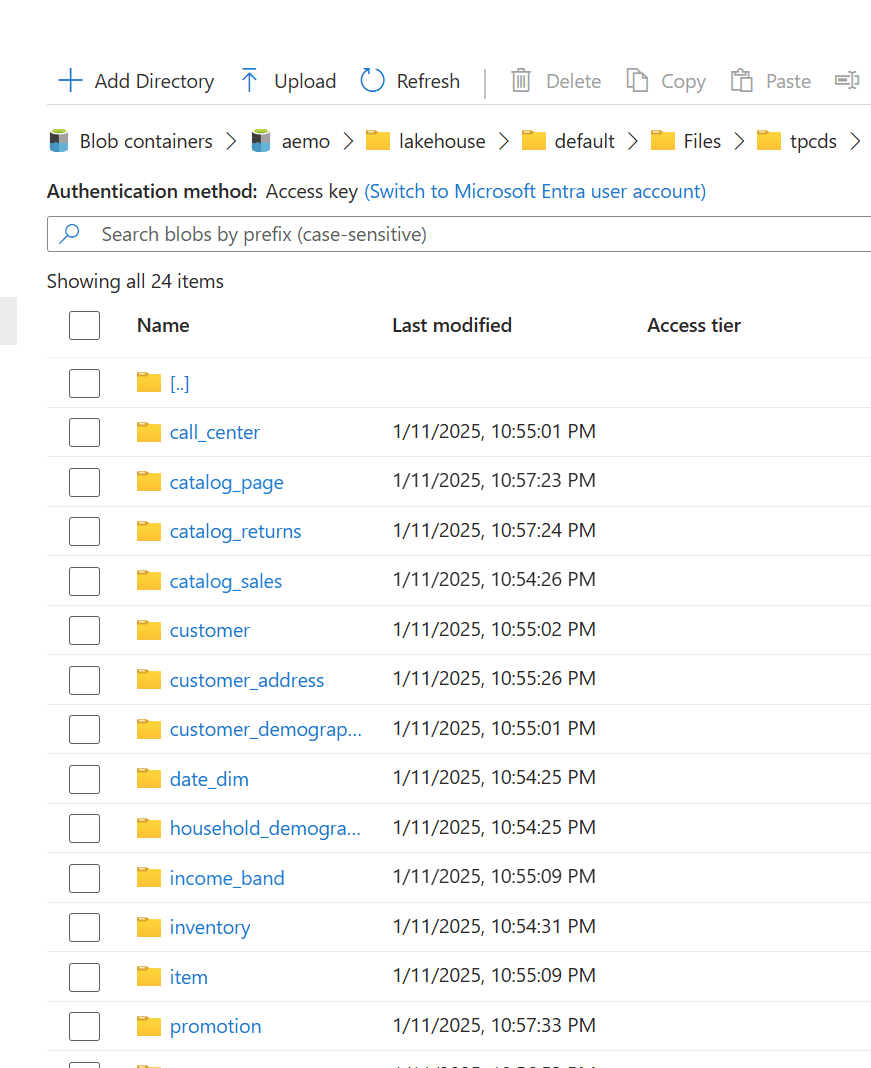

I tested OpenDAL with Azure ADLS Gen2, OneLake, and Cloudflare R2. To do so, I created a small Python notebook (with the help of ChatGPT) that syncs any local folder to remote storage. It works both ways: if you add a file to the remote storage, it gets replicated locally as well.
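The two-way sync idea can be sketched without any storage library. This is a minimal illustration, not the notebook's actual code: it assumes each side has already been listed into a set of relative paths, and that a file present on only one side should be copied to the other. The function name `plan_sync` is hypothetical, and deletions and conflicting edits are ignored.

```python
from __future__ import annotations


def plan_sync(local: set[str], remote: set[str]) -> tuple[list[str], list[str]]:
    """Return (to_upload, to_download) as sorted lists of relative paths."""
    to_upload = sorted(local - remote)      # only on the local side
    to_download = sorted(remote - local)    # only on the remote side
    return to_upload, to_download


# Example with made-up file names
uploads, downloads = plan_sync({"a.csv", "b.csv"}, {"b.csv", "c.csv"})
print("upload:", uploads)
print("download:", downloads)
```

Once the two lists exist, the actual copying is just a read on one side and a write on the other, which is where a unified API helps.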

Currently, there’s a limitation: OpenDAL doesn’t work with OneLake yet, because it doesn’t support OAuth tokens, and SAS tokens offer only limited functionality. That part is by design: with a SAS token you can’t list a container in OneLake, for example.

You can download the notebook here

Here’s an example of how it works:

You just need to define your object store, and the rest is the same: list, create a folder, copy, write, and so on all work identically. This is really a very good piece of work!
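To make "define the store once, reuse everything else" concrete, here is a rough sketch using the `opendal` Python package. It is not the notebook's code: the helper names `make_operator` and `upload_and_list` are hypothetical, and any folder, bucket, or credential value passed in is a placeholder. The operator methods used (`create_dir`, `write`, `list`) are part of the OpenDAL Python binding.

```python
def make_operator(scheme: str, **options):
    """Build an OpenDAL operator; this is the only backend-specific step."""
    # Imported lazily so the helper below can be tried with any object
    # that exposes the same methods. Install with: pip install opendal
    import opendal

    return opendal.Operator(scheme, **options)


def upload_and_list(op, folder: str, name: str, data: bytes) -> list:
    """Create a folder, write one file into it, and return the sorted listing."""
    op.create_dir(folder)              # OpenDAL directory paths end with "/"
    op.write(folder + name, data)      # data must be bytes
    return sorted(entry.path for entry in op.list(folder))
```

For a local folder, the operator would be something like `make_operator("fs", root="/tmp/opendal-demo")`. For Cloudflare R2, the same helper takes the `"s3"` scheme with `bucket`, `endpoint`, `region="auto"`, `access_key_id`, and `secret_access_key`; for ADLS Gen2 it takes `"azdls"` with `filesystem`, `endpoint`, `account_name`, and `account_key`. In every case `upload_and_list` stays unchanged.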

You can watch the presentation, though it is too technical for my taste 🙂 https://www.youtube.com/watch?v=eVMIzY1K5iQ

Local folder:

Cloudflare R2: